#linux

Fail2ban jail to mitigate DoS attacks against Apache

Recently, one of our shared hosting webservers at Onlime GmbH got hit by a DoS attack. The attacker started a large vulnerability scan for common WordPress security issues. We already had common brute-force attack patterns on WordPress covered by a custom Fail2Ban jail, which mainly trapped POST requests to xmlrpc.php or wp-login.php (the usual dumb WP brute-force attacks...). But this DoS attack targeted hundreds of customer sites and did not get trapped by our existing rules.

After having blocked the attacker's IP (glad this was no large-scale DDoS!), I wrote an extra Fail2Ban jail which traps such simple DoS attacks. It's a very basic Fail2Ban jail that should cover common attacks and should not cause any false positives, as it is only triggered by a large number of failed GET requests.
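The idea behind such a jail can be sketched in a few lines of Python. This is not the Fail2Ban filter itself, just an illustration of the counting logic. It assumes Apache's common/combined log format, treats any 4xx response to a GET as "failed", and uses a made-up `max_retry` threshold; the function name `find_offenders` is hypothetical.

```python
import re
from collections import Counter

# Start of an Apache common/combined log line: client IP, request method, status code.
# Assumption: no vhost prefix in front of the client IP.
LOG_LINE = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[[^\]]+\] "(?P<method>[A-Z]+) \S+[^"]*" (?P<status>\d{3}) '
)

def find_offenders(lines, max_retry=300):
    """Return the sorted IPs with at least max_retry failed (4xx) GET requests."""
    failed = Counter()
    for line in lines:
        m = LOG_LINE.match(line)
        if m and m.group("method") == "GET" and m.group("status").startswith("4"):
            failed[m.group("ip")] += 1
    return sorted(ip for ip, count in failed.items() if count >= max_retry)
```

Fail2Ban additionally limits the count to a time window (`findtime`) and takes care of the actual ban, so a real jail needs far less code than this.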

GitLab PostgreSQL Data Recovery

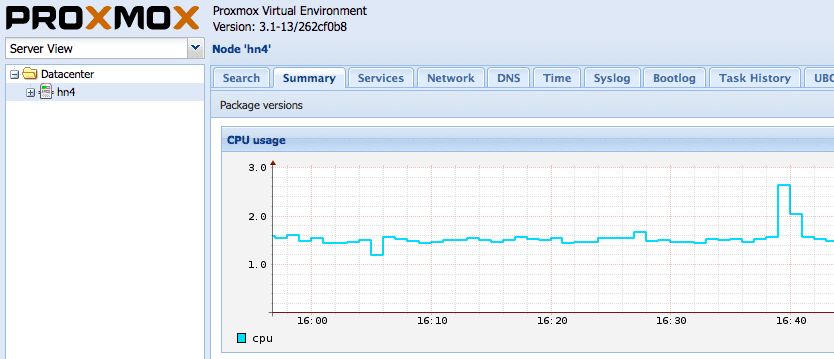

Today, shit happened on a larger on-premise GitLab EE instance of one of our Onlime GmbH customers. GitLab's production.log started to fill up with PG::Error (FATAL: the database system is in recovery mode) errors which were somehow related to LFS operations. That definitely didn't sound cool and smelled like data corruption. The customer noticed it through CI jobs failing with 500 Internal Server Errors, and let me know immediately.

As we have that GitLab server running in an LXC container on a ZFS-based system (Proxmox VE), it was easy to pull a clone of the full system and play around with PostgreSQL data recovery before working on live data. I decided to go for a full data restore by dumping the data and loading it from scratch into a freshly initialized PostgreSQL data dir.

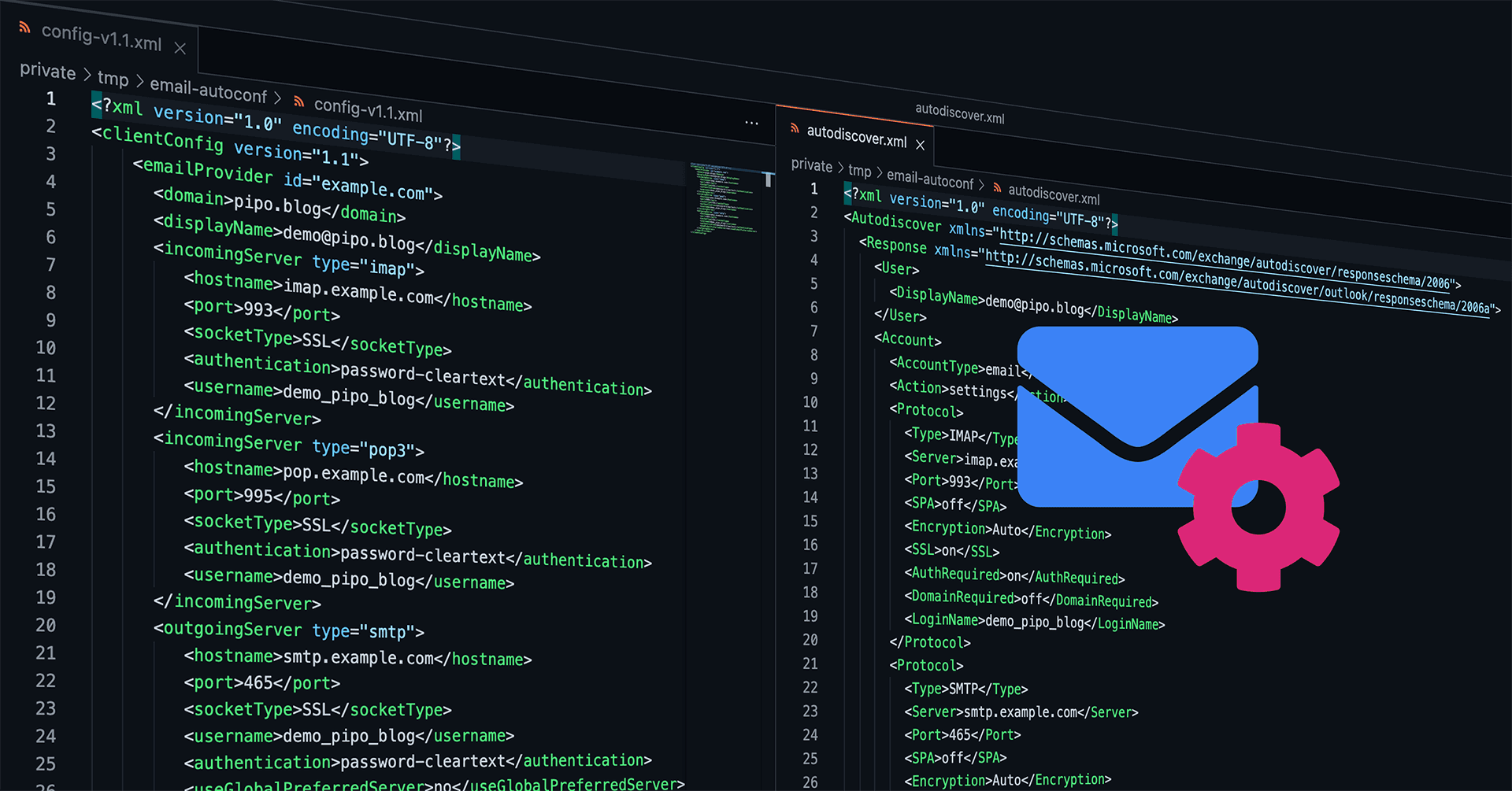

Automated Mail Client Configuration with email-autoconf

How nice would it be if every email client could be auto-configured and the user just needed to enter his credentials? Enter the email address plus password, hit enter, done. How come people nowadays still need to care about incoming IMAP and outgoing SMTP servers, encryption (SSL/TLS vs. STARTTLS protocols), ports (for a regular end user this is just a number without any meaning, right?), and maybe even wonder what the username was if it differs from the actual email address?

You may tell me: Hey, we're in 2021, email is so old-school! Or you may tell me: Oh, don't you know there is a thing called auto-configuration? Unfortunately, both statements are wrong. Email today is still widely used for serious communication (and yes, you're right, it should have been replaced a long time ago by something better! But it wasn't.), and there is such a thing called auto-configuration, but there is just no real standardized protocol that works for every mail client out there. Sadly, there has been no real development in that field during the last 10+ years.

So, let me present that small Python project Martin and I have been developing for Onlime GmbH back in Feb 2020 and which I have now finally published on GitLab.com: onlime/email-autoconf

GitLab CI/CD of a Nuxt.js frontend over SSH/rsync

Continuous deployment is great and a must for every modern web app. Forget about the times when you had to constantly log into your production server over SSH to run some git pull based deployment and cumbersome, error-prone build tasks, or had to build your project locally and then deploy it with rsync to production, which is not that sexy either. It is all doable and scriptable, but we want to have the whole process automated without any manual work involved. I much prefer to use GitLab CI/CD over GitHub Actions - ok, mainly just because I am more into it and prefer to run a self-hosted GitLab instance. GitLab just gets the job done very well!

We want the whole process to be straightforward without any fancy extras. It should be a matter of 15 minutes to set it up on every new project, and I don't like to introduce any extra dependencies. I am just talking about deployment of a static site / SPA, a Vue.js based frontend that is generated by Nuxt.js. So let's keep it simple here! I am going to present the solution I am using to deploy this TechBlog.

Cleanup helper script to destroy old ZFS snapshots

For years now, I have been using this little helper script written in Python, which solves a very basic task: Destroy legacy ZFS snapshots. We all know ZFS snapshots are so lightweight that we tend to create many of them, either manually (e.g. before a major system upgrade) or automated (e.g. by our replication or backup jobs). It can sometimes be cumbersome to destroy legacy ZFS snapshots that are no longer needed.

So, here comes help with zfs-destroy-snapshots.py, which you could deploy to /usr/local/sbin to have that handy script ready. I know, this is a really small snippet I could just post to GitHub Gist (ok, here you go: onlime/zfs-destroy-snapshots.py) or Pastebin, but I think it still deserves a full-blown blog post.
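To give an idea of what such a cleanup involves, here is a minimal sketch. It is not the zfs-destroy-snapshots.py script itself. It assumes the snapshot list comes from `zfs list -H -p -t snapshot -o name,creation` (tab-separated, creation time as epoch seconds) and only builds the `zfs destroy` commands instead of running them; the function names and the age-based selection are assumptions for illustration.

```python
import time

def select_old_snapshots(zfs_list_output, max_age_days, now=None):
    """Return snapshot names older than max_age_days.

    Expects tab-separated "name<TAB>creation" lines, creation in epoch seconds.
    """
    now = time.time() if now is None else now
    cutoff = now - max_age_days * 86400
    old = []
    for line in zfs_list_output.splitlines():
        if not line.strip():
            continue
        name, creation = line.split("\t")
        # Only real snapshots (dataset@snapname) are ever selected.
        if "@" in name and int(creation) < cutoff:
            old.append(name)
    return old

def destroy_commands(snapshots):
    """Build one 'zfs destroy' argument list per snapshot (dry run, nothing is executed)."""
    return [["zfs", "destroy", name] for name in snapshots]
```

Printing the commands first and executing them only after a review (e.g. via `subprocess.run`) is a cheap safeguard, since a destroyed snapshot cannot be brought back.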

Restrict commands by SSH authorized_keys command option

SSH authorized_keys allows you to define a command which is executed upon authentication with a specific key, by prefixing that key's entry with the command="cmd" option.
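As a rough sketch of how this can be used: the forced command can be a small wrapper that inspects what the client originally asked for (sshd exposes it in the `SSH_ORIGINAL_COMMAND` environment variable) and only runs commands from an allow-list. The wrapper path, the allow-list, and the function names below are hypothetical.

```python
import os
import shlex

# Hypothetical authorized_keys entry pointing at this wrapper:
#   command="/usr/local/bin/ssh-wrapper.py" ssh-ed25519 <public key> backup@example
ALLOWED = {"uptime", "df"}  # assumption: the only programs this key may run

def resolve_command(original_command, allowed=ALLOWED):
    """Return the argv to execute, or None if the request is not allowed."""
    try:
        argv = shlex.split(original_command or "")
    except ValueError:  # e.g. unbalanced quotes
        return None
    if argv and argv[0] in allowed:
        return argv
    return None

def main():
    argv = resolve_command(os.environ.get("SSH_ORIGINAL_COMMAND"))
    if argv is None:
        raise SystemExit("command not allowed")
    # No shell is involved, so ';' or '&&' in the request are passed as plain arguments.
    os.execvp(argv[0], argv)
```

Calling `main()` at the end of the deployed script turns it into the forced command; whatever the client requests, only the allow-listed programs are ever started.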

The authorized_keys man page explains this wonderful feature as follows:

Secure External Backup with ZFS Native Encryption

Let's improve our Simple and Secure External Backup solution that I published back in 2018. Back then, I was using rsync over SSH to pull backup data, and LUKS as full disk encryption for the external drives. As we all know, transferring data with rsync can get horribly slow and blow up your I/O if you're transferring millions of small files. Also, LUKS encryption may be a bit low-level and inflexible. What we want to accomplish: A performant and secure backup solution based on ZFS, using zfs send|recv for efficient data transfer, and ZFS native encryption to secure our external drives. So let's go ahead and build that thing from scratch on a fresh 2021 stack!

MySQL FEDERATED Storage Engine and Replication

Back in Nov 2020, it was time to rethink the MySQL replication infrastructure for Airpane Controlpanel, our customer dashboard at Onlime GmbH. The whole application runs on a separate server, but an extract of mail account information (mail account credentials, mail mappings/forwardings) needs to get replicated to our 3 mail servers (mailsrv, mx1, mx2). At that time, I was using MySQL MASTER-SLAVE replication for a single database in a 4-node setup (1 master + 3 slaves).

For security reasons, I no longer wanted any mail server to have access to the binlog of the server that hosted our controlpanel (even though I already had that limited to a single database with mailserver-related data only). I also wanted to reduce complexity a bit, using MySQL replication just between the 3 mail servers and promoting mailsrv to master.

The mailserver data is extracted from our controlpanel database by MySQL triggers (mainly AFTER INSERT, AFTER UPDATE, and BEFORE DELETE triggers) into a separate database mailsync. How could I get the whole mailsync data stored on the remote server mailsrv without using MySQL replication? I didn't want to care about this at the application level.

That's where MySQL FEDERATED Storage Engine comes into play!

Fail2ban persistent banning

If you are using Fail2ban, there is no standard recommended way to persistently ban IPs. Some people recommend doing this outside of Fail2ban, using e.g. iptables-persistent, which is actually super easy to install and configure. But let's say we don't want to install any extras and want to accomplish the same with Fail2ban, as we already have Fail2ban on every single host (which is a must!).

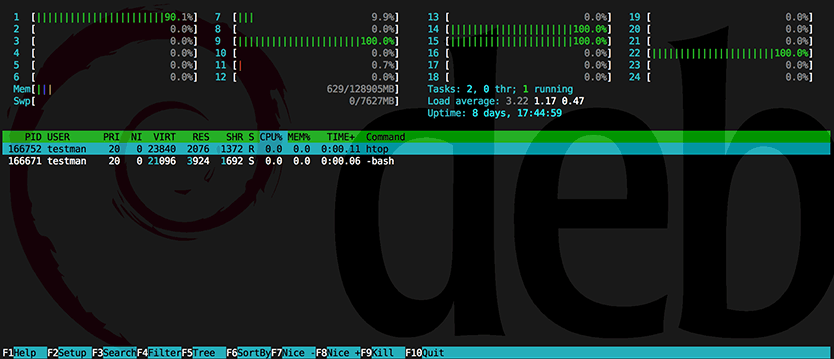

Process hiding in LXC using hidepid capabilities of procfs

Back in 2013, I wrote about Linux process hiding using hidepid capabilities of procfs. On shared webhosting servers at Onlime GmbH, I have used the hidepid=2 mount option for procfs (/proc filesystem) for improved security. With this option, a regular system user (which could potentially be an evil customer that has gained SSH access and tries to spy on others' processes) only sees his own processes; all other processes are hidden.

This is great and super simple to enable, as it has been part of the official Linux kernel for quite a while now. But things start to get a little trickier when we try to set the hidepid procfs mount option inside an LXC container. Enabling the mount option on the host system will not do! Inside an LXC container, a regular system user is still able to see all processes. Before LXC 2.1 (released in Sept 2017), this was still quite doable, as we just had to create a new AppArmor profile on the host system to allow the LXC container to set the /proc mount options. But since LXC 2.1 it has become super tricky. I will present both solutions below, in case you have struggled with this hard one in newer LXC versions.
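Whether hidepid is actually in effect, on the host or inside a container, can be checked by looking at the mount options of /proc. The following is a small sketch that parses the contents of /proc/mounts; the helper name `hidepid_value` is hypothetical, and the value is returned as the raw string found there.

```python
def hidepid_value(mounts_text, mount_point="/proc"):
    """Return the hidepid option of the procfs mount at mount_point, or None if unset."""
    value = None
    for line in mounts_text.splitlines():
        fields = line.split()
        # /proc/mounts fields: device, mount point, fs type, options, dump, pass
        if len(fields) >= 4 and fields[1] == mount_point and fields[2] == "proc":
            value = None
            for option in fields[3].split(","):
                if option.startswith("hidepid="):
                    value = option.split("=", 1)[1]
    return value
```

Run inside the container as `hidepid_value(open("/proc/mounts").read())`; a result of `None` means a regular user there can still see everybody's processes.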

Simple and Secure External Backup

What we are going to set up here is a simple and secure offsite and offline backup server. Let's assume you already have an existing backup server that is connected to the internet 24/7 and does daily/weekly/monthly backups. We would now like to set up a second offsite backup server that just cares about storing data on an encrypted external drive, which you physically detach after each backup run.

So, we are talking about offline backups in addition to having this server offsite - at a different location than your main backup server.

Preferably, your main backup server would also be offsite. But as it needs to pull data frequently, its storage is always available and not getting detached.

Let's call your main backup server backup and the one we are going to set up here extbackup.

Proxmox VE 4.x OpenVZ to LXC Migration

At Onlime Webhosting we chose Proxmox VE as our favorite virtualization platform and have been running a bunch of OpenVZ containers for many years now, with almost zero issues. We very much welcome the small overhead and simplicity of container-based virtualization and wouldn’t want to move to anything else. Proxmox VE added ZFS support by integrating ZFSonLinux back in Feb 2015 with the great Proxmox VE 3.4 release, which would actually have deserved a major version bump because of this killer feature.

Install Composer with Ansible, the lean way

Every PHP developer needs Composer, and as a webhosting company, we at Onlime GmbH of course had to provide the Composer binary to every customer, deploying it to every webserver. But how come the recommended Composer installation for Linux/Unix/macOS is so clunky, only providing the latest composer.phar through an installer?

Sure, installers are fine, but not for a sysadmin who likes to keep things simple and fully manage his infrastructure with Ansible. Installing Composer should be nothing more than deploying the latest composer.phar, period. But the author of Composer somehow forgot to provide us with a download URL for the latest stable version. (Sorry Jordi Boggiano, don't want to blame you - maybe I just overlooked it and should have asked you via DM. But writing that small Ansible playbook was still faster than looking any further.)

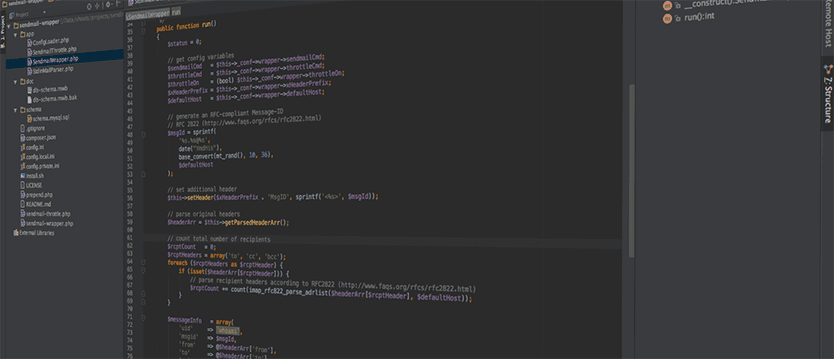

Sendmail-Wrapper for PHP

Years ago I wrote about the extensive sendmail wrapper with sender throttling, a pretty simple Perl script. It reliably provided throttling of the email volume per day by the sender’s original UID (user id). It also logged the paths of scripts that sent emails directly via sendmail (e.g. via PHP’s mail() function). The main flaw in the original sendmail wrapper was security, though. Since on Linux every executable script must be readable by the user that calls it, the throttle table in MySQL was basically open and every customer could manipulate it. Every customer could raise his own throttling limit and thereby circumvent the throttling.

Today, I’m publishing my new sendmail-wrapper that is going to fix all the flaws of the previous version and add some nice extras. The new sendmail-wrapper is written entirely in PHP and does not require any external libraries. It is a complete rewrite and has pretty much nothing in common with the old Perl version.
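The core of per-day throttling by UID boils down to a counter keyed by sender and day. The sketch below illustrates that idea in Python, purely for illustration: it is not taken from the PHP sendmail-wrapper, it keeps the counters in memory instead of MySQL, and the class name and limit are made up.

```python
from collections import defaultdict
from datetime import date

class DailyThrottle:
    """Allow at most limit_per_day messages per sender UID and calendar day."""

    def __init__(self, limit_per_day):
        self.limit_per_day = limit_per_day
        self._counts = defaultdict(int)  # (uid, day) -> messages accepted so far

    def allow(self, uid, day=None):
        """Count one message for uid; return False once the daily limit is reached."""
        key = (uid, day or date.today())
        if self._counts[key] >= self.limit_per_day:
            return False
        self._counts[key] += 1
        return True
```

Whatever store holds these counters, the lesson from the old Perl version applies: it must not be readable or writable by the throttled user, otherwise the limit is only cosmetic.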

Webapp-Scanner

Webhosting customers are messies, at least some of them - or (sadly, that's the truth) the bigger part of them. Some people still think they can run the same blog software or CMS for years without ever caring about upgrading. I tell my customers over and over how important it is to keep their website up to date and not to leave any outdated code lying around. Still, as long as their website doesn't get hacked or defaced, they don't really seem to care.

If you're in the same situation as me and you are providing webhosting services to your friends or customers, read on.

Process hiding - hidepid capabilities of procfs

Five years ago I wrote about kernel-based process hiding in Linux (see my old blog posts: Simple process hiding kernel patch, Process hiding Kernel patch for 2.6.24.x, RSBAC – Kernel based process hiding). It is time to continue the story and finally present a real solution without the hassle of a self-compiled kernel.

How can I prevent users from seeing processes that do not belong to them?

In January 2012, Vasiliy Kulikov came up with a kernel patch that solved the problem nicely by adding a hidepid mount option for procfs. The patch landed in Linux kernel 3.3.

In the meantime, this patch luckily also landed in the 3.2 kernel of Debian Wheezy (see backport request in Debian bug report #669028). The feature has also been backported to the kernel of Red Hat Enterprise Linux 6.3 (see RHEL 6.3 Release Notes), and from there to CentOS 6.3 and Scientific Linux 6.3. Recently, it was even backported to the 2.6.18 kernel in RHEL 5.9.

Proxmox VE Restricting Web UI access

With the release of Proxmox VE 3.0 back in May 2013, the Proxmox VE web interface no longer requires Apache. Instead, it now uses a new event-driven API server called pveproxy. That was actually a great step ahead, as we all know Apache gets bulkier every day and the new pveproxy is a much more lightweight solution. But the question arose: How do I protect my Proxmox VE WebUI with basic user authentication?

Basically, we do not trust any web application out there, so we had better double-protect the whole WebUI with plain old basic auth - previously done in Apache by .htaccess.